The Original 80KB Utility

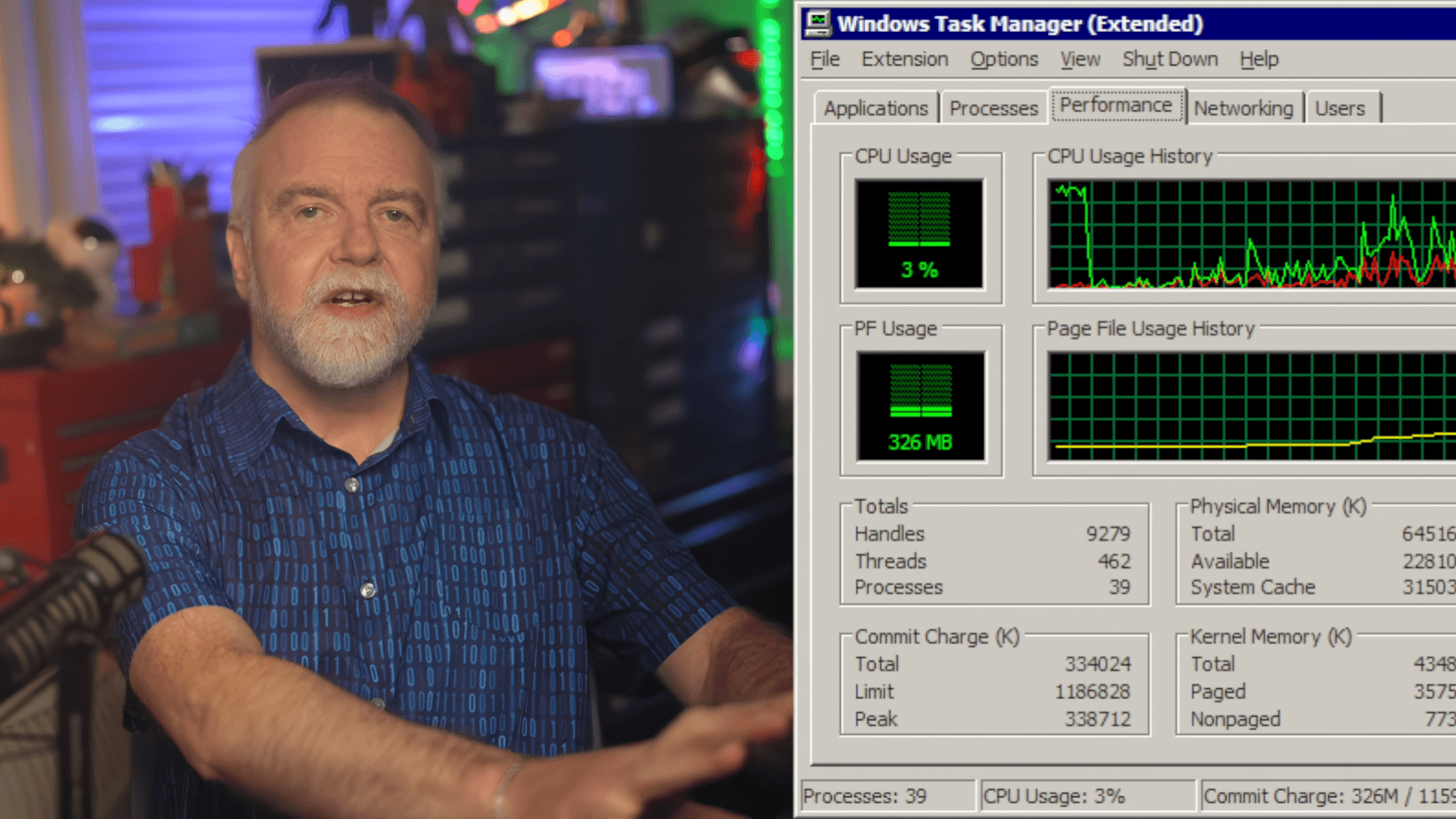

Modern operating systems stack services and abstraction layers in the name of user convenience, and that scale can obscure the minimalist engineering behind foundational utilities like the Windows Task Manager. Recently, Dave Plummer, an engineer credited with numerous iconic Windows features, described the original architecture of the Task Manager. According to his account, the first version of the utility was a mere 80KB. The current Task Manager is estimated at around 4MB, roughly 50 times larger. The gap is more than a matter of file size; it reflects a shift in engineering philosophy, from strict resource constraint to feature-rich abundance.

Plummer’s primary concern when developing the utility was not feature parity or future-proofing; it was survival. On the limited hardware of the era, the Task Manager had to stay crisp and responsive, a critical lifeline, even if the rest of the PC had hung or failed completely. That requirement dictated a ruthless focus on efficiency. As he stated, “Every line has a cost; every allocation can leave footprints. Every dependency is a roommate that eats your food and never pays rent.” The philosophy pushed him toward a tool that did as much as possible while intruding as little as possible. The resulting 80KB utility was an exercise in constrained optimization that put core stability ahead of expansive capability.

The lesson goes beyond a file size comparison. It concerns the discipline required to build software that must work reliably under duress. The original Task Manager was designed as the last resort for a failing system, so its code had to be lean, direct, and predictable in its resource consumption. It was built for the worst-case scenario rather than the average user experience, which made it robust despite its small size.

Modern Bloat vs. Core Stability

The contrast between the original 80KB design and the current 4MB iteration highlights a persistent tension in software development: the trade-off between feature growth and core stability. As operating systems have evolved, they have absorbed layers of comfort, future-proofing, and user-facing enhancements. These additions bring convenience, but they also increase resource overhead. Plummer’s anecdote is a reminder that the complexity that comes with features users want is often paid for in performance.

This trade-off is most evident in how the original Task Manager handled its startup sequence. A simple single-instance check only tests whether another copy is already running and, if so, activates it. Plummer described a more conversational approach: on launch, the utility sent a private message to the existing process. This was not just an existence check; it was a request for confirmation. If the existing Task Manager instance failed to respond, the new instance assumed it was frozen and started anyway, giving the user a working Task Manager to recover with. This kind of active, communicative check is a hallmark of resource-aware code designed for failure conditions.

Understanding this shift requires recognizing that modern development often favors the "framework first" approach. Developers start with a comprehensive framework, add layers of comfort, and then are surprised when the resulting utility consumes hundreds of megabytes. The original approach, conversely, was highly surgical. It solved a specific, critical problem—system recovery—using the absolute minimum resources necessary. This contrast provides a valuable lesson for developers and system architects alike: the most powerful features are often those that are invisible, efficient, and deeply integrated into the operating system's core functionality.

The original startup check rests on three engineering principles:

- Proactive Health Check: Instead of a passive existence check, the newly launched instance actively queries the running process and waits for a positive response.

- Failure Assumption: Silence from the existing instance is treated as a failure state, triggering a recovery mechanism.

- Minimal Overhead: The entire process is designed to execute with minimal CPU or memory spikes, ensuring the check itself doesn't contribute to system slowdown.
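The three principles above can be sketched in a few lines. This is only an illustration, not Plummer's code: the original presumably relied on Windows messaging, which his account does not detail, so Python queues stand in for the message channel here. The names `PING`, `PONG`, `QUIT`, `message_pump`, and `decide_startup_action`, and the timeout values, are all hypothetical.

```python
import queue
import threading

# Hypothetical message names; the source does not say what the real message was.
PING = "are-you-alive"
PONG = "yes"
QUIT = "quit"


def message_pump(inbox):
    """Stand-in for a responsive existing instance: answer pings until told to quit."""
    while True:
        message, reply_box = inbox.get()
        if message == QUIT:
            break
        if message == PING:
            reply_box.put(PONG)


def decide_startup_action(inbox, timeout=0.5):
    """Decide what a newly launched instance should do.

    inbox is the existing instance's message queue, or None if none was found.
    Silence within `timeout` seconds is treated as a hung instance.
    """
    if inbox is None:
        return "launch-new"  # nothing is running, so start normally

    reply_box = queue.Queue()
    inbox.put((PING, reply_box))  # proactive health check
    try:
        reply = reply_box.get(timeout=timeout)
    except queue.Empty:
        return "launch-new"  # failure assumption: no answer means frozen
    return "activate-existing" if reply == PONG else "launch-new"


if __name__ == "__main__":
    # A healthy instance: a thread is servicing the inbox.
    healthy_inbox = queue.Queue()
    pump = threading.Thread(target=message_pump, args=(healthy_inbox,), daemon=True)
    pump.start()
    print(decide_startup_action(healthy_inbox))  # activate-existing
    healthy_inbox.put((QUIT, None))

    # A frozen instance: the inbox exists but nothing is reading it.
    frozen_inbox = queue.Queue()
    print(decide_startup_action(frozen_inbox, timeout=0.1))  # launch-new
```

The check costs one small message and a bounded wait, which is in keeping with the minimal-overhead goal: the new instance never blocks longer than the timeout, even when the old one is completely hung.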

Implications for System Performance Optimization

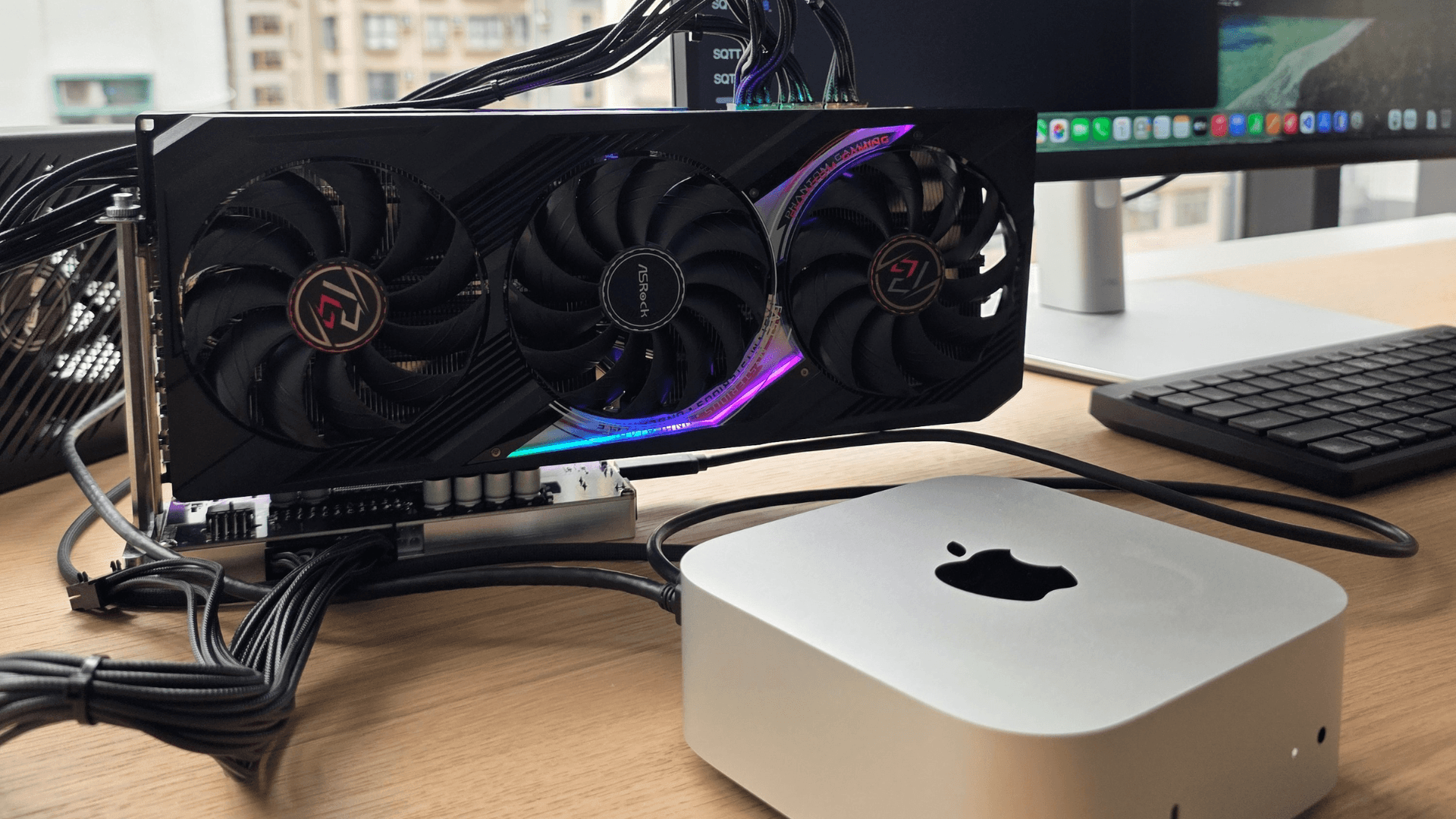

For gamers and power users, the Task Manager’s origins are more than a historical curiosity; they offer a useful lens on modern system performance. The underlying principle, that every line of code has a cost, still applies. As games and professional applications demand ever more graphical fidelity and processing power, the operating system's foundational utilities need to stay efficient so they do not become bottlenecks.

The core takeaway for the gaming community is the importance of recognizing the difference between *feature richness* and *system efficiency*. While modern OS updates add incredible functionality, users must remain aware of how these additions might impact the resource budget. The original 80KB Task Manager was a testament to engineering minimalism, proving that maximum utility does not require maximum size. This principle applies to everything from background services to driver optimization.

Furthermore, the advanced startup check illustrates a concept crucial to high-performance computing: graceful failure handling. A system that can detect when a critical utility is frozen and automatically initiate a recovery sequence is inherently more stable than one that simply crashes or hangs. This level of engineering foresight is what separates a merely functional operating system from a truly reliable, high-performance platform.

The open question raised by Plummer's account is the ongoing tension between convenience and efficiency. As hardware grows more powerful, the temptation for developers to add more features, more layers of comfort, and more future-proofing will only grow. The challenge for the industry, and for future OS development, is to keep the discipline of the original engineers: build the most capable tools with the fewest possible resources. That commitment to efficiency is what keeps the operating system a reliable, fast platform for demanding applications like modern gaming.

Source date: April 12, 2026