Imagine getting access to the world’s most sophisticated artificial intelligence at a 90% discount, only to realize that your private data is the real currency. A shadow economy is thriving in the gaps left by international tech restrictions, and it is targeting the biggest names in the industry with surgical precision. This is not just a story about cheap software; it is a large-scale security problem in which user data is harvested to train rival models in a high-stakes geopolitical race.

What this means for users and developers: the integrity of the AI tools you use depends on who sits between you and the model's API. When these "transfer stations" bypass official channels, they open a privacy blind spot: every prompt you send can be collected and fed into the development of competing models without your consent.

An investigation by Zilan Qian, a researcher at the Oxford China Policy Lab, has pulled back the curtain on a sophisticated API proxy scheme currently operating within China. This illicit network relies on "transfer stations" that resell access to Anthropic’s Claude models. While the official price of these frontier models can be prohibitive for some users, these grey-market vendors offer the same model access for as little as 10% of the standard retail price. It is a classic black-market undercut, but the technical overhead suggests something far more organized than simple piracy.

Oxford Researcher Exposes Claude Transfer Stations

These networks do not hide in the dark corners of the internet. Instead, they operate with surprising visibility across mainstream platforms like GitHub, Taobao, and Telegram. By leveraging these public-facing sites, the API proxy scheme reaches thousands of developers looking for a workaround to regional restrictions or high costs. For many users in regions where Anthropic does not offer official access, these proxies represent the only way to stay competitive in the rapidly moving AI landscape.

The Oxford China Policy Lab findings indicate that these transfer stations act as middlemen. When a user sends a prompt to the proxy, the proxy forwards it to the actual model, often using stolen credentials, and then passes the response back. The end user gets a seamless experience and may not even realize they are participating in an illicit data-harvesting operation. The low cost is the bait, and the sheer scale of the operation suggests a highly organized infrastructure behind the scenes.
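Because a transfer station sits between the user and the model, it necessarily sees every prompt, every response, and often the user's API key. A simple defensive habit is to check where a client is actually configured to send requests before trusting it with sensitive work. The sketch below is a minimal illustration, not an official tool. It assumes the official endpoint host is `api.anthropic.com` and that the client takes its endpoint from an `ANTHROPIC_BASE_URL` environment variable; treat that variable name as an assumption about your client. The function name `is_official_endpoint` is hypothetical.

```python
import os
from urllib.parse import urlparse

# Assumption: the official Anthropic API host. Any other host in the request
# path terminates TLS itself and can read prompts, responses, and API keys.
OFFICIAL_HOSTS = {"api.anthropic.com"}


def is_official_endpoint(base_url: str) -> bool:
    """Return True only if base_url uses HTTPS and its host is an official one.

    Matching the exact parsed hostname (not a substring) means look-alikes such
    as "api.anthropic.com.example.net" are rejected.
    """
    parsed = urlparse(base_url)
    host = (parsed.hostname or "").lower()
    return parsed.scheme == "https" and host in OFFICIAL_HOSTS


if __name__ == "__main__":
    # Hypothetical check: ANTHROPIC_BASE_URL is assumed to be where the client
    # sends requests; fall back to the official endpoint if it is unset.
    base = os.environ.get("ANTHROPIC_BASE_URL", "https://api.anthropic.com")
    if is_official_endpoint(base):
        print(f"Requests go directly to {base}")
    else:
        print(f"Warning: requests will be relayed through {base}")
```

A passing check only rules out the obvious case of a third-party relay URL; it cannot detect interception further along the network path. A failing check is simply a cue to ask who operates that host and what it does with your traffic.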

Within China's grey market for AI access, these proxies serve as a vital, if illicit, artery for tech startups. Because official access is often blocked or heavily monitored, these "fallback" channels become the primary way for local developers to test their own applications against Western frontier models. However, the convenience comes at a heavy cost in security and intellectual property.

Shadow Networks Target Anthropic Claude Models

The operational tactics behind these schemes are remarkably efficient. To keep prices this low, these networks rely on a mix of stolen credentials and model substitution. In some cases, a proxy may quietly swap a high-end model for a cheaper, less capable one during peak times to cut costs, while still charging the user for the premium experience. This "bait and switch" is common in the grey market, but it is the data harvesting that poses the real threat.

Crucially, these proxy networks are not just reselling access; they are actively harvesting every prompt and output that passes through their servers. In the world of AI development, this data is gold. The harvested logs are then bundled and sold as high-quality training data to other AI companies. This creates a cycle in which the outputs of Western models are used to train the very systems built to compete with them, effectively subsidizing rival technology through stolen user interactions.

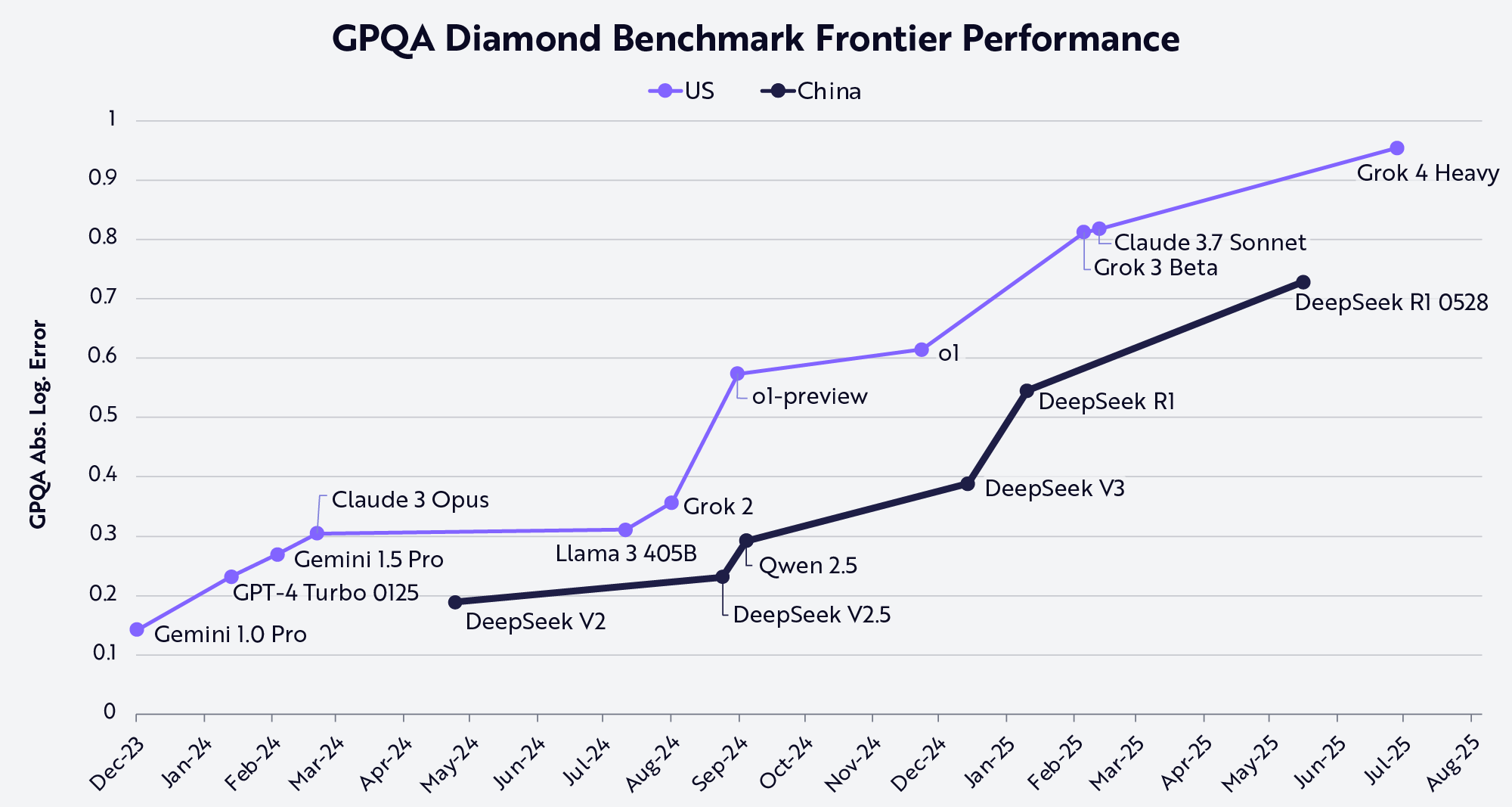

This systematic theft goes beyond simple account flipping; it is a form of industrial-scale distillation. By studying how a frontier model like Claude responds to complex queries, rival labs can "distill" that capability into their own smaller, cheaper models. This lets them narrow the performance gap without investing the billions of dollars that original research and development requires.
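Because an API returns generated text rather than the model's internal probabilities, "distillation" from harvested logs most plausibly means ordinary supervised fine-tuning on the teacher model's responses. A generic form of that objective (a textbook sketch, not a description of any particular lab's pipeline) trains a student model with parameters $\theta$ to maximize the likelihood of each harvested response $y$ given its prompt $x$:

$$
\mathcal{L}(\theta) = -\sum_{(x,\,y) \in \mathcal{D}} \; \sum_{t=1}^{|y|} \log p_\theta\left(y_t \mid x,\, y_{<t}\right)
$$

Here $\mathcal{D}$ is the collection of harvested prompt-response pairs and $y_t$ is the $t$-th token of the teacher's response. Classic knowledge distillation matches the teacher's full output probabilities instead, which a text-only interface does not expose; that is why the sheer volume of harvested prompts and responses matters so much.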

White House Issues Critical Industry Warnings

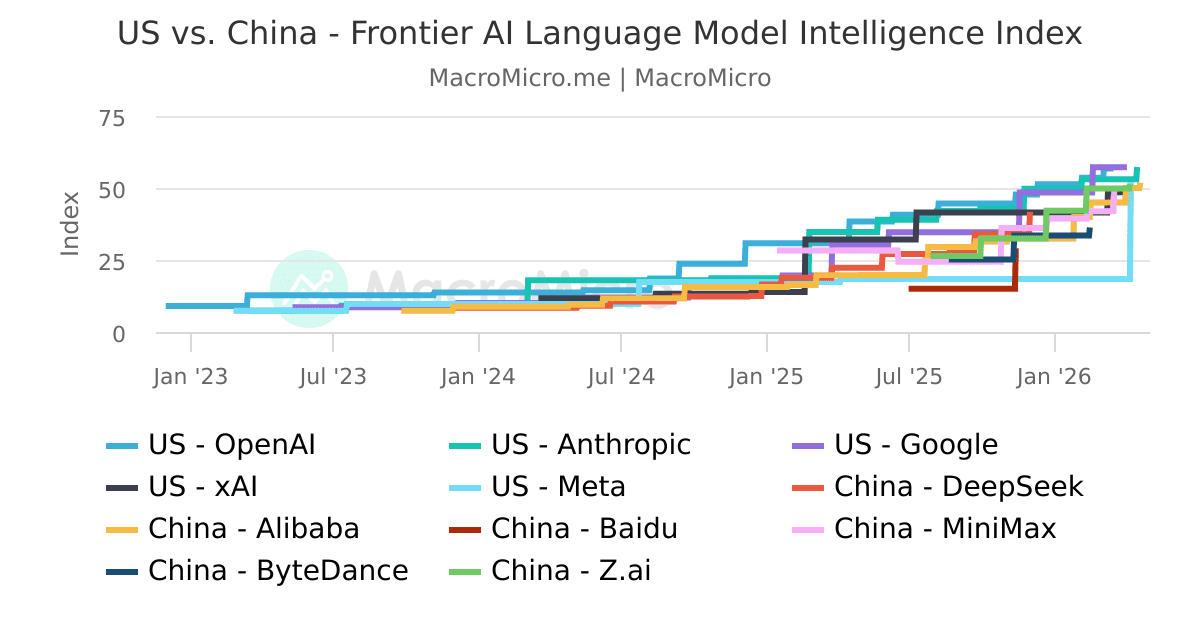

The geopolitical context is making these findings increasingly urgent. In late April, the White House accused Chinese entities of running "industrial-scale" distillation campaigns of this kind. The warnings suggest the activity is not merely the work of independent hackers but is often tied to larger strategic goals: parity with Western AI at any cost, regardless of copyright law or terms of service.

Anthropic itself has been proactive in identifying these threats. As early as February, the company disclosed that it had identified and shut down approximately 24,000 fraudulent accounts. These accounts were not just individual users trying to save a few dollars; they were systematically linked to prominent Chinese AI labs. The botting and account creation needed to sustain these proxy networks operate at massive scale, with constant rotation of IPs and payment methods to stay ahead of security filters.

The involvement of major labs suggests a top-down approach to bypassing international restrictions. When a lab cannot legally buy the compute or the API access it needs, it turns to these proxy networks to fill the gap. This creates a persistent game of cat-and-mouse between US-based AI safety teams and the developers of these illicit transfer stations.

Chinese Labs Target Frontier AI Models

The specific labs mentioned in connection with these activities include DeepSeek, Moonshot AI, and MiniMax. These are not small startups; they are among the best-funded and most technically capable AI firms in China. That thousands of fraudulent accounts have been linked to these specific entities shows how far they are willing to go to access frontier models. These labs are under immense pressure to match the frontier models from OpenAI and Anthropic, and the API proxy scheme offers a shortcut to that goal.

By using these proxies, these labs can test their own models against the industry leaders in real time. They can see where their models fail and where Claude excels, then use those specific data points to fine-tune their own systems. It is a highly effective, if unethical, way to accelerate development. This creates a significant challenge for companies like Anthropic and OpenAI, which must now balance the need for open access against the necessity of protecting their models from being "drained" by competitors.

As the race for AI supremacy intensifies, these grey markets will likely become even more sophisticated. We are seeing the birth of a new kind of cyber-warfare, one where the ammunition is not malware, but the very prompts and outputs that define modern intelligence. The battle for the API is just beginning, and the stakes involve the very future of how AI is developed and controlled on a global scale.

The security landscape for frontier AI will likely shift toward mandatory hardware-level verification to prevent bulk account creation. Expect to see Anthropic and its peers implement more aggressive geofencing and biometric "proof of personhood" for API access in high-risk regions. The cat-and-mouse game between illicit transfer stations and AI safety teams will define the next two years of global tech policy.

Frequently Asked Questions

What is an API proxy scheme?

It is an illicit network where "transfer stations" resell access to premium AI models like Claude at a fraction of the cost, often while stealing user data.

Are these AI proxy networks safe to use?

No, because these networks harvest your prompts and outputs to sell as training data, posing a significant risk to your privacy and intellectual property.

Which Chinese labs were linked to these fraudulent accounts?

Research from Anthropic and other bodies has specifically identified accounts linked to DeepSeek, Moonshot AI, and MiniMax.


Source date: May 9, 2026