OpenClaw Agents Reshape Apple Hardware Demand

The transition from Intel to Apple Silicon was heralded as a masterstroke of vertical integration, but the recent surge in localized AI processing is exposing the limitations of a closed ecosystem. High-end Mac Studio and Mac Pro configurations are currently experiencing unprecedented lead times, driven largely by the emergence of OpenClaw, a sophisticated AI agent framework that requires massive pools of unified memory to function efficiently. As these autonomous agents become central to professional workflows, the demand for 128GB and 192GB RAM variants has outstripped Apple’s current manufacturing capacity, forcing a reevaluation of how the company handles high-performance compute.

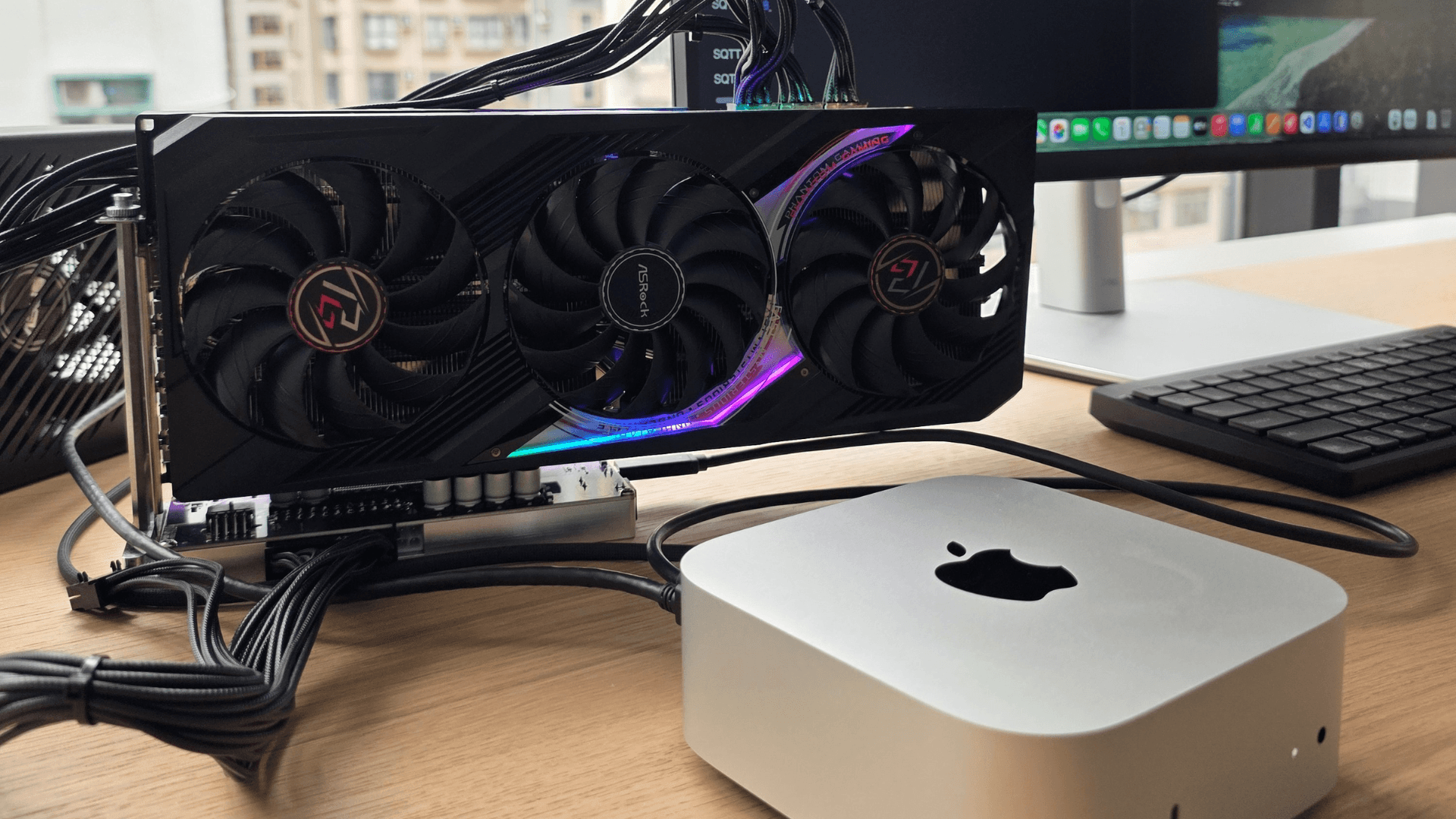

Why this matters: For PC builders and hardware enthusiasts, Apple's rumored pivot toward Nvidia eGPU support would be the first major admission that in-house silicon scaling cannot keep pace with the rapid growth of AI compute requirements. By opening the gates to external GeForce hardware, Apple would be attempting to bridge the gap between its power-efficient architecture and the raw TFLOPS throughput found in dedicated desktop GPUs.

Nvidia eGPUs Challenge Unified Memory Architecture

The technical friction between Apple Silicon and Nvidia hardware centers on memory management. Apple’s M-series chips use a Unified Memory Architecture (UMA), where the CPU and GPU share a single pool of high-bandwidth LPDDR5 or LPDDR5X memory, depending on the chip generation. Because neither processor has to copy data into a separate memory pool, this is very efficient for tasks like video rendering, but it creates a ceiling for AI models that exceed the physical capacity of the SoC. Nvidia’s Ada Lovelace architecture, conversely, relies on dedicated GDDR6X VRAM that sits next to the GPU and feeds the CUDA cores that dominate the AI landscape. Intel-based Macs supported eGPUs, but Apple Silicon Macs dropped the feature, so re-introducing it would require a massive overhaul of the macOS kernel and the Metal API to handle the latency introduced by the PCIe bus.

Peak-throughput figures suggest that while an M3 Max can deliver roughly 14 TFLOPS of FP32 performance, an RTX 4090 dwarfs this with over 82 TFLOPS. For users running OpenClaw agents, the bottleneck isn't just compute speed. It is the sheer volume of parameters that must be held in memory. Apple’s 192GB of unified memory is impressive in capacity, but at roughly 800 GB/s on Ultra-class chips its bandwidth trails the aggregate of a multi-GPU Nvidia setup, where a single RTX 4090 already provides about 1 TB/s. The value-per-dollar proposition is shifting as a result: professional users are finding that a $6,000 Mac Pro cannot match the AI training speeds of a $3,000 custom PC equipped with dual GPUs, leading to the current push for external hardware compatibility.
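The capacity argument above can be made concrete with a back-of-the-envelope estimate: weight memory is roughly parameter count times bytes per parameter. The sketch below is illustrative only. The model sizes are hypothetical, the 24 GB figure is the RTX 4090's VRAM capacity (not stated in this article), and the estimate ignores activations, caches, and operating-system overhead.

```python
# Rough sketch: do a model's weights fit in a given memory pool?
# Model sizes are hypothetical examples. 24 GB is the RTX 4090's VRAM
# (an added assumption); 192 GB is the unified-memory tier named above.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weights_gb(params_billions: float, precision: str) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * BYTES_PER_PARAM[precision]
    return total_bytes / 1e9


def fits(params_billions: float, precision: str, capacity_gb: float) -> bool:
    """True if the weights fit; ignores activations, caches, and OS overhead."""
    return weights_gb(params_billions, precision) <= capacity_gb


if __name__ == "__main__":
    for size in (7, 70, 180):  # hypothetical model sizes, in billions of parameters
        need = weights_gb(size, "fp16")
        print(
            f"{size}B params @ fp16: {need:.0f} GB | "
            f"fits 24 GB VRAM: {fits(size, 'fp16', 24)} | "
            f"fits 192 GB unified: {fits(size, 'fp16', 192)}"
        )
```

Under these assumptions, a mid-sized model can overflow a single discrete card while still fitting in a large unified pool, which is the trade-off between capacity and raw throughput described above.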

Thunderbolt 5 Bandwidth Solves Latency Issues

The primary technical hurdle for eGPUs has always been the bottleneck of the connection interface. Thunderbolt 3 and 4 were limited to 40Gbps, which effectively neutered high-end cards like the RTX 30-series or 40-series by restricting data transfer between the card and the CPU. However, the introduction of Thunderbolt 5, based on the USB4 Version 2.0 specification, changes the calculus. It doubles the symmetric link rate to 80Gbps and doubles PCIe data throughput over Thunderbolt 4. Bandwidth Boost can push one direction up to 120Gbps, although that mode is asymmetric and aimed mainly at display traffic. The added headroom makes an external Nvidia 4090 far more viable for Mac users and should shrink the 20-30% performance penalty seen in previous generations.
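The bandwidth argument above reduces to simple arithmetic: transfer time is payload size divided by link rate. The sketch below compares the 40Gbps and 120Gbps headline figures quoted above for a hypothetical 24 GB payload (chosen here as an assumption, matching an RTX 4090's VRAM size). It is an idealized upper bound, since usable PCIe throughput over Thunderbolt is lower than the headline link rate; the `efficiency` parameter is a hypothetical knob for modeling that loss.

```python
# Idealized sketch: time to move a payload across an external link.
# The payload size and the efficiency factor are illustrative assumptions.


def transfer_seconds(payload_gb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Seconds to move payload_gb gigabytes over a link rated at link_gbps gigabits/s."""
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link rate must be positive and efficiency in (0, 1]")
    payload_gigabits = payload_gb * 8  # 8 bits per byte
    return payload_gigabits / (link_gbps * efficiency)


if __name__ == "__main__":
    payload = 24  # GB, hypothetical model load
    for name, rate in (("40 Gbps link", 40), ("120 Gbps link", 120)):
        print(f"{name}: {transfer_seconds(payload, rate):.1f} s")
```

Link rate mostly affects how quickly data is staged onto the card. Once a model is resident in VRAM, per-step traffic is much smaller, which is why the penalty varies so widely by workload.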

This hardware shift coincides with Apple’s recent 3nm manufacturing challenges at TSMC. The move to the N3B and N3E nodes has been plagued by yield issues, contributing to the "AI boom strain" mentioned in recent supply chain reports. By offloading the heaviest graphical and AI workloads to an Nvidia eGPU, Apple could reduce the thermal and manufacturing pressure on its own silicon. The M-series chips could then focus on what they do best, high performance per watt and low-latency system tasks, while the external "heavy lifters" handle the brute-force TFLOPS required by modern AI agents.

Cupertino Supply Chains Face AI Pressure

The economic impact of this shift is already visible in Apple’s pricing strategy. Recent price hikes on high-memory configurations and the discontinuation of certain entry-level Mac Studio models reflect a pivot toward a more expensive, enterprise-focused hardware stack. As OpenClaw and similar tools become industry standards, the "prosumer" market is being squeezed. Enthusiasts who once bought a base-model Mac Studio for creative work now find themselves priced out as Apple prioritizes the high-margin, high-spec units that AI developers crave.

Thermal efficiency remains the one area where Apple maintains a clear lead. An M3 Max under full load consumes significantly less power than an equivalent Nvidia setup, making it ideal for compact workstations. However, in the world of AI agents, power efficiency often takes a backseat to total time-to-completion. If an Nvidia-powered eGPU can finish a task in half the time, the power draw becomes a secondary concern for professional labs and studios. This reality is forcing Apple to rethink its "silicon-only" stance to prevent a mass exodus of developers to the Windows and Linux ecosystems.
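The efficiency-versus-speed trade-off above comes down to energy = power × time. The sketch below uses hypothetical wattages and runtimes (none of them measured or taken from this article) to show that a setup finishing in half the time can still consume more total energy, which is the cost a lab accepts in exchange for faster completion.

```python
# Sketch of the energy vs. time-to-completion trade-off.
# All wattages and runtimes are hypothetical placeholders, not measurements.


def energy_wh(power_watts: float, seconds: float) -> float:
    """Energy consumed in watt-hours for a job at constant power draw."""
    return power_watts * seconds / 3600


if __name__ == "__main__":
    # Hypothetical: an efficient SoC takes one hour at 100 W.
    soc = energy_wh(power_watts=100, seconds=3600)
    # Hypothetical: an eGPU setup takes half the time at 450 W.
    egpu = energy_wh(power_watts=450, seconds=1800)
    print(f"SoC:  {soc:.0f} Wh in 60 min")
    print(f"eGPU: {egpu:.0f} Wh in 30 min")
```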

Future Scaling and Expert Forecast

The integration of external Nvidia hardware will likely force Apple to redesign its thermal management systems for the next iteration of the Mac Studio. As AI agent demand scales, expect Apple to prioritize enterprise-level silicon allocations over consumer-grade inventory. This shift suggests a future where the Mac is no longer a standalone workstation but a modular hub for heterogeneous compute.

Frequently Asked Questions

Will Nvidia eGPUs work on current M3 MacBook Pro models?

Not as things stand. Current M3 Macs lack macOS driver support for Nvidia GPUs and the proprietary CUDA platform. Compatibility would likely require a macOS update, along with Thunderbolt 5 hardware to avoid significant performance bottlenecks.

Why is OpenClaw causing Mac supply shortages?

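OpenClaw needs a large pool of GPU-accessible memory, which on a Mac means unified memory rather than dedicated VRAM. That requirement has led to a massive spike in orders for 128GB+ Mac Studio configurations, and the surge has exhausted Apple's current inventory of high-bin M2 and M3 Ultra chips.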

Is an M3 Max faster than an RTX 4090 for gaming?

No, the RTX 4090 offers significantly higher TFLOPS and dedicated ray-tracing hardware. While the M3 Max is more power-efficient, it cannot match the raw frame rates of Nvidia's flagship desktop card.

Tags: #Apple #Nvidia #eGPUs #AIBoom #MacSupplyStrain


Source date: April 5, 2026